The trace viewer is available across logs, experiments, and Human review. Select any row to open a trace in a panel on the right side of your screen, showing all spans with detailed information about inputs, outputs, timing, and metadata. Use the corresponding buttons to expand the trace to fullscreen or to open it in a separate page.

Anatomy of a trace

A trace represents one end-to-end execution: a single request or interaction in logs, or a single test case run in experiments. Every trace contains one or more spans, each representing a unit of work with a start and end time. Spans nest inside each other to reflect your application’s execution flow. Braintrust assigns a type to each span:

| Span type | What it represents |
|---|---|
| `eval` | The root span for an evaluation run, wrapping a task span for your application code. One per test case; contains the input, expected output, and all child spans. |
| `task` | A unit of application logic: a workflow, pipeline step, or named operation. In logs, the root span is always a task span. Multiple task spans can appear in a single trace. |
| `llm` | A single call to an LLM. Shows the model, messages, parameters, token usage, and cost. |
| `function` | A named block of application logic: retrieval, formatting, routing, etc. |
| `tool` | A tool call made by the model: an external API, code execution, database query, etc. |
| `score` | The result of a scorer, either online (in logs) or offline (in evaluations). Contains the score value, scorer name, and for LLM-as-a-judge scorers, the judge’s reasoning. |
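To make the nesting concrete, here is a minimal sketch that models a trace as a tree of typed spans and prints it as an indented outline. The `Span` class and its field names are illustrative assumptions, not Braintrust's actual span schema.

```python
from dataclasses import dataclass, field
from typing import Iterator


@dataclass
class Span:
    """Hypothetical in-memory span; field names are illustrative only."""
    name: str
    type: str  # one of: eval, task, llm, function, tool, score
    start: float  # seconds since the trace started
    end: float
    children: list["Span"] = field(default_factory=list)


def walk(span: Span, depth: int = 0) -> Iterator[tuple[int, Span]]:
    """Yield (depth, span) pairs in parent-before-child order."""
    yield depth, span
    for child in span.children:
        yield from walk(child, depth + 1)


# A logs-style trace: the root is a task span with nested work beneath it.
trace = Span("answer_question", "task", 0.0, 2.4, [
    Span("retrieve_docs", "function", 0.1, 0.5),
    Span("chat_completion", "llm", 0.6, 2.2, [
        Span("search_api", "tool", 1.0, 1.4),
    ]),
    Span("relevance", "score", 2.2, 2.4),
])

outline = [f"{'  ' * depth}{s.type}: {s.name}" for depth, s in walk(trace)]
print("\n".join(outline))
```

The outline mirrors what the hierarchy view conveys: one root span, with each unit of work indented under the span that started it.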

View as a hierarchy

While viewing a trace, select Trace to view the trace as a nested hierarchy. Each span is indented under its parent, which makes this view useful for understanding the logical structure of your application: which function called which, how tool calls nest under LLM calls, and how sub-tasks relate to the root task. Expand and collapse branches to navigate the call graph.

Each span row shows inline metrics. By default, these are duration, total tokens, and estimated LLM cost. Cost is propagated from child spans to parent spans, making it easy to see which parts of a multi-step workflow account for most of your cost. Use the Display metric types option to toggle which metrics appear:

- Duration (on by default)

- Total tokens (on by default)

- Time to first token

- Estimated LLM cost (on by default)
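The cost roll-up from child spans to parent spans can be pictured as a recursive sum. The sketch below illustrates the idea only and is not Braintrust's implementation; the dictionary layout and the `cost` and `children` keys are assumptions made for the example.

```python
def rolled_up_cost(span: dict) -> float:
    """Return the span's own cost plus the cost of all its descendants."""
    own = span.get("cost", 0.0)
    return own + sum(rolled_up_cost(child) for child in span.get("children", []))


# Hypothetical trace: only llm spans carry a cost of their own.
trace = {
    "name": "support_agent", "type": "task",
    "children": [
        {"name": "plan", "type": "llm", "cost": 0.002},
        {"name": "research", "type": "task", "children": [
            {"name": "summarize", "type": "llm", "cost": 0.010},
            {"name": "lookup", "type": "tool"},
        ]},
    ],
}

# Compare branches to see which one dominates the total.
for child in trace["children"]:
    print(f"{child['name']}: ${rolled_up_cost(child):.3f}")
print(f"total: ${rolled_up_cost(trace):.3f}")
```

Because every parent carries the sum of its subtree, comparing sibling rows is enough to find the expensive branch without expanding it.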

View as a timeline

While viewing a trace, select Timeline to understand execution flow and token efficiency:

- Timeline bars: Each bar represents a span, scaled by a metric of your choice and color-coded by span type.

- Token distribution overview: Breaks down LLM span token usage by type (uncached input, cached read, cache write, and output) and shows cache hit rate per span, making it easy to spot where caching is and isn’t working.

Timeline bars can be scaled by any of the following metrics:

- Duration (default): Bar width represents wall-clock time.

- Total tokens: Bar width represents total token usage, useful for identifying spans that consume the most context.

- Prompt tokens: Bar width represents input token usage.

- Completion tokens: Bar width represents output token usage.

- Estimated cost: Bar width represents the estimated cost of each span.

When a filter is applied, enable Maintain hierarchy to preserve parent-child relationships: parent spans are kept even if they don’t match the filter, as long as they have matching descendants.

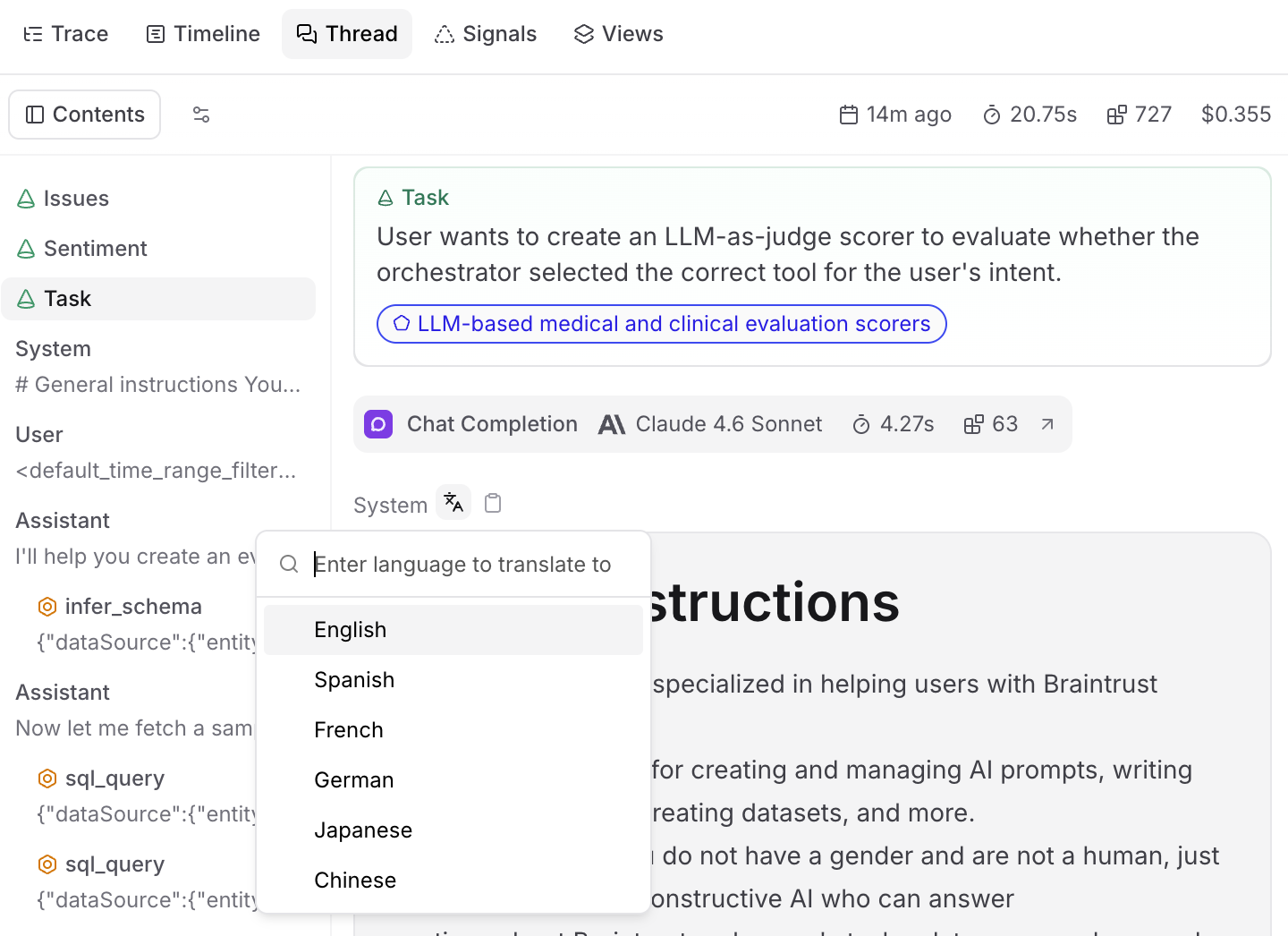
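The Maintain hierarchy behavior can be sketched as a tree prune: a span survives if it matches the filter or if any of its descendants does. This is a rough illustration under assumed data shapes (dictionaries with `type` and `children` keys), not the product's actual filtering code.

```python
from typing import Callable, Optional


def prune(span: dict, matches: Callable[[dict], bool]) -> Optional[dict]:
    """Keep a span if it matches or has a matching descendant; else drop it."""
    kept_children = [
        kept for child in span.get("children", [])
        if (kept := prune(child, matches)) is not None
    ]
    if matches(span) or kept_children:
        return {**span, "children": kept_children}
    return None


# Hypothetical trace used only for illustration.
trace = {
    "name": "agent", "type": "task", "children": [
        {"name": "format_prompt", "type": "function", "children": []},
        {"name": "step", "type": "task", "children": [
            {"name": "chat", "type": "llm", "children": []},
        ]},
    ],
}

# Filter to llm spans: "agent" and "step" do not match but are kept as ancestors.
filtered = prune(trace, lambda s: s["type"] == "llm")
```

Here `format_prompt` is dropped because neither it nor any descendant is an LLM span, while the two task spans stay so the matching span keeps its place in the tree.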

View as a conversation

While viewing a trace, select Thread to view the trace as a conversation thread. This view displays messages, tool calls, and scores in chronological order, stripping away the hierarchy to show what was said and in what order. Use it for reading agent conversations and understanding the narrative flow of multi-turn interactions.

- By default, the thread view renders raw span data. To change this, apply a preprocessor: choose the built-in Thread preprocessor to format the trace as a readable conversation, or select a custom preprocessor to control exactly how messages are rendered. When topics are enabled, topic tags and facet outputs also appear at the top of the thread view.

- Use Find or press Cmd/Ctrl+F to search within the thread view and quickly locate specific content such as message text and score rationale. Matches are highlighted in place using your browser’s native highlighting. This search is scoped to the thread view content; use the trace view’s search feature to search across spans.
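Conceptually, the thread view flattens the span tree and orders it by time. Here is a minimal sketch of that flattening, assuming each span is a dictionary with hypothetical `start`, `type`, and `children` keys. Which span types count as thread events is also an assumption made for the example.

```python
def flatten(span: dict) -> list[dict]:
    """Collect a span and all of its descendants into one flat list."""
    spans = [span]
    for child in span.get("children", []):
        spans.extend(flatten(child))
    return spans


def as_thread(root: dict, shown_types=("llm", "tool", "score")) -> list[str]:
    """Order message-like spans by start time, ignoring nesting depth."""
    events = [s for s in flatten(root) if s["type"] in shown_types]
    events.sort(key=lambda s: s["start"])
    return [f"{s['start']:.1f}s {s['type']}: {s['name']}" for s in events]


# Hypothetical two-turn agent trace; the turns are deliberately stored out of order.
trace = {"name": "chat_session", "type": "task", "start": 0.0, "children": [
    {"name": "turn_2", "type": "task", "start": 3.0, "children": [
        {"name": "reply_2", "type": "llm", "start": 3.1},
    ]},
    {"name": "turn_1", "type": "task", "start": 0.1, "children": [
        {"name": "reply_1", "type": "llm", "start": 0.2, "children": [
            {"name": "get_weather", "type": "tool", "start": 0.8},
        ]},
        {"name": "helpfulness", "type": "score", "start": 2.0},
    ]},
]}

thread = as_thread(trace)
print("\n".join(thread))
```

The structural task spans disappear from the result, leaving only the sequence of model calls, tool calls, and scores in the order they happened.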

Translate message content

Select the translate control on a message bubble in Thread view, or open the context menu on any string field in Tree view, and pick a target language. Choose from English, Spanish, French, German, Japanese, and Chinese, or type any language name. The translated text appears inline beneath the message, with markdown and code formatting preserved. Translations are not saved to the span; they appear inline for the current session only.

Test and apply signals

While viewing a trace, select Signals to test topic facets and scorers on the current trace.

- Topic facets: Test how preprocessors transform the trace data, test which summaries the prompts extract, or apply the complete facet (preprocessor + prompt) to see the end-to-end result.

- Scorers: Test scorers, apply them to the trace, or configure an automation rule for online scoring.

Create custom trace views

While viewing a trace, select Views to create custom visualizations using natural language. Describe how you want to view your trace data and Loop will generate the code. For example:

- “Create a view that renders a list of all tools available in this trace and their outputs”

- “Render the video url from the trace’s metadata field and show simple thumbs up/down buttons”

Self-hosted deployments: If you restrict outbound access, allowlist https://www.braintrustsandbox.dev to enable custom views. This domain hosts the sandboxed iframe that securely renders custom view code.

Search within a trace

While viewing a trace, use Find or press Cmd/Ctrl+F to search for content within the trace. A scope dropdown lets you choose where to search:

- This span — Search only within the currently selected span.

- Full trace — Search across all spans in the trace.

Trace search finds content within the currently open trace. To search across all traces in your project, use filters or deep search.
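The two scopes differ only in which spans are scanned. Below is a small sketch of that distinction, using a hypothetical flat list of spans and a case-insensitive substring match. The viewer's actual matching rules are not specified here, so treat the matching logic as an assumption.

```python
import json


def search(spans: list[dict], query: str, selected_id: str, scope: str) -> list[str]:
    """Return ids of spans whose serialized content contains the query."""
    if scope == "this_span":
        candidates = [s for s in spans if s["id"] == selected_id]
    elif scope == "full_trace":
        candidates = spans
    else:
        raise ValueError(f"unknown scope: {scope}")
    needle = query.lower()
    return [s["id"] for s in candidates if needle in json.dumps(s).lower()]


# Hypothetical spans from one trace; "s3" plays the currently selected span.
spans = [
    {"id": "s1", "type": "task", "input": "Where is my refund?"},
    {"id": "s2", "type": "llm", "output": "Your refund was issued Monday."},
    {"id": "s3", "type": "tool", "output": {"status": "shipped"}},
]

print(search(spans, "refund", selected_id="s3", scope="this_span"))
print(search(spans, "refund", selected_id="s3", scope="full_trace"))
```

With the tool span selected, the narrow scope finds nothing, while the full-trace scope surfaces the two spans that mention the refund.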

Change span data format

When viewing a trace, each span field (input, output, metadata, etc.) displays data in a specific format. Change how a field displays by selecting the view mode dropdown in the field’s header. Available views:

- Pretty - Parses objects deeply and renders values as Markdown (optimized for readability)

- JSON - JSON highlighting and folding

- YAML - YAML highlighting and folding

- Tree - Hierarchical tree view for nested data structures

- LLM - Formatted AI messages and tool calls with Markdown

- LLM Raw - Unformatted AI messages and tool calls

- HTML - Rendered HTML content

View raw trace data

When viewing a trace, select a span and then use the raw data button in the span’s header to view the complete JSON representation. The raw data view shows all fields, including metadata, inputs, outputs, and internal properties that may not be visible in other views. The raw data view has two tabs:

- This span - Shows the complete JSON for the selected span only

- Full trace - Shows the complete JSON for the entire trace

Use the raw data view to:

- Inspect the complete span structure for debugging

- Find specific fields in large or deeply nested spans

- Verify exact values and data types

- Export or copy the full span for reproduction
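Once you have copied the raw JSON out of the viewer, locating a field in a deeply nested span is easy to script. The sketch below walks any parsed JSON value and reports the path to every occurrence of a key. The sample payload is made up and does not reflect the exact fields Braintrust emits.

```python
import json
from typing import Any, Iterator


def find_key(value: Any, key: str, path: str = "$") -> Iterator[tuple[str, Any]]:
    """Yield (path, value) for every occurrence of `key` in nested JSON data."""
    if isinstance(value, dict):
        for k, v in value.items():
            child_path = f"{path}.{k}"
            if k == key:
                yield child_path, v
            yield from find_key(v, key, child_path)
    elif isinstance(value, list):
        for i, item in enumerate(value):
            yield from find_key(item, key, f"{path}[{i}]")


# Made-up span payload standing in for JSON copied from the raw data view.
raw = """
{"input": {"messages": [{"role": "user", "content": "hi"}]},
 "metadata": {"model": "example-model", "retries": {"model": "fallback-model"}}}
"""

hits = list(find_key(json.loads(raw), "model"))
for path, found in hits:
    print(path, "=", found)
```

Printing the path alongside each value also helps with verifying exact values and types, since the parsed Python objects keep the JSON distinction between strings, numbers, and nested structures.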

Navigate to trace origins

To navigate to the origin of a trace that comes from a prompt or dataset:

- Open the menu in the trace header.

- Choose Go to origin prompt or Go to origin dataset.

Use this to:

- Trace issues back to the original prompt or dataset

- See which dataset example led to a result

- Move efficiently between trace analysis and refining prompts or datasets

Share traces

When viewing a trace:

- Select Share.

- Choose whether to make the trace Private or Public. Making a trace public grants access only to that trace.

- Click Copy link and share it with others.

Re-run a prompt

When viewing a prompt span in a trace:

- Select Run.

- In the Run prompt dialog, make changes as necessary.

- Select Test to see the output.

Next steps

- Score online to apply automated scoring to production traces

- Add to a dataset to curate interesting traces into ground truth

- Filter and search to find specific traces across logs

- Create dashboards to monitor metrics at scale

- Analyze with Loop to query across traces with natural language